What Is Generative Engine Optimization (GEO): A Complete 2026 Guide

In May 2024, 56% of news-related Google searches resolved without a single click to a website. By May 2025, that number had climbed to 69%, a 13-percentage-point jump in the year following Google’s AI Overviews rollout, according to Similarweb’s zero-click research. If you’re still measuring SEO success primarily by organic clicks, you are measuring a shrinking pool.

The traffic that SEO built over the years is being absorbed by AI answers. This is more than a change in search behavior: the entire purchase journey is shifting.

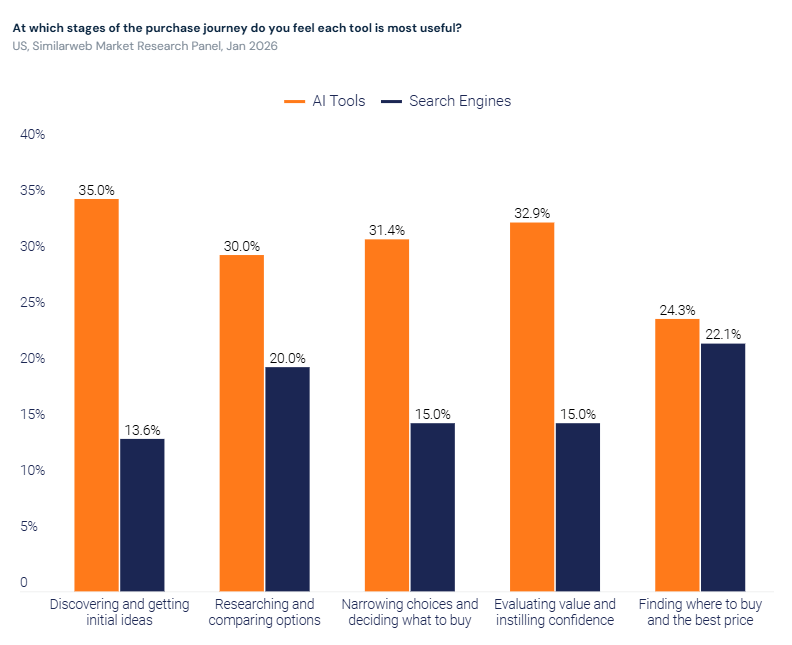

According to Similarweb’s 2026 Generative AI Brand Visibility Index, 35% of US consumers now use AI at the product discovery stage compared to 13.6% who use search. The shortlist is set before a user ever opens a search bar.

When a user asks ChatGPT about the best email marketing tool or prompts Google’s AI Mode for a breakdown of marketing attribution models, the content that informed those answers may be yours. But unless your brand is explicitly cited, you are invisible in that interaction: no click, no impression, no awareness.

That is the gap GEO fills. Not by chasing AI rankings (there are none), but by structuring content and building authority so that AI-powered platforms cite your brand when generating answers.

GEO is not a replacement for SEO. It is the discipline that ensures your brand remains visible inside AI-generated answers as traditional organic clicks decline.

This guide covers what GEO actually is, how it differs from SEO and AEO (answer engine optimization), the eight tactics that move the needle on AI citation, and how to measure whether any of it is working.

Before we get into tactics, one reality check: ChatGPT prompts average around 60 words, compared to 3.4 words for a typical Google search, according to Similarweb’s 2025 GenAI Landscape report.

The user who types into ChatGPT is not the same user who types three words into a search box. They are more specific, more conversational, and significantly more likely to act on whatever the AI tells them. That user’s journey no longer necessarily runs through your website. Being cited in the answer is now the conversion event.

What is generative engine optimization (GEO)?

Generative engine optimization is the practice of structuring content and building brand authority so that AI-powered platforms select, cite, and surface your brand in their responses to user queries. Unlike traditional search, where the goal is a ranked link, GEO targets inclusion inside the synthesized answer itself.

How do generative AI engines actually work?

To optimize for GEO, you need to understand the mechanism behind it. Most modern AI search engines use a process called Retrieval-Augmented Generation (RAG), which works in two stages.

First, retrieval: when a user submits a query, the AI system searches an index to find relevant documents. This index is usually the web, either via a search engine’s existing index (as with Google AI Overviews) or via real-time crawling (as with Perplexity). The system retrieves a set of candidate documents.

Second, generation: a large language model synthesizes those retrieved documents into a single, coherent response. It selects which sources to cite, how prominently to feature them, and what to say about them. The output is not a list of links. It is a composed answer, with your content woven in or left out.

The practical implication of RAG is this: being indexed is necessary, but not sufficient. Your content must also be readable, structurally clear, and authoritative enough for the LLM to consider it worth citing in a given response. That is the GEO layer.
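To make the two stages concrete, here is a toy sketch. It assumes a tiny in-memory index and uses keyword overlap as a stand-in for a real retriever, and the generation step simply stitches passages together with numbered citations instead of calling an LLM. Every URL, passage, and function name is hypothetical.

```python
import re

# Hypothetical in-memory "index": URL -> passage text.
INDEX = {
    "https://example.com/geo-guide": "GEO structures content so AI platforms cite your brand in answers.",
    "https://example.com/seo-basics": "SEO optimizes pages to rank in a list of search results.",
    "https://example.com/recipes": "A simple recipe for sourdough bread with a long ferment.",
}

def tokens(text):
    """Lowercase word set, used for a crude overlap score."""
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(query, index, k=2):
    """Stage 1: score documents by keyword overlap and keep the top k."""
    q = tokens(query)
    scored = [(len(q & tokens(text)), url) for url, text in index.items()]
    scored = [(score, url) for score, url in scored if score > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [url for _, url in scored[:k]]

def generate(urls, index):
    """Stage 2 stand-in: compose one answer from the retrieved passages."""
    return " ".join(f"{index[url]} [{i}]" for i, url in enumerate(urls, start=1))

cited = retrieve("how does GEO get a brand cited in AI answers", INDEX)
answer = generate(cited, INDEX)
print(cited)
print(answer)
```

All three pages are in the index, but only the two best matches reach the generation step. That is the "necessary, but not sufficient" point in miniature.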

Researchers at Princeton University and IIT Delhi formalized this discipline in a 2024 paper that introduced GEO as a distinct optimization framework. Their benchmark showed that content with added statistics and quotations achieved 30-40% higher visibility in AI-generated responses compared to unmodified content. Keyword stuffing, by contrast, performed below baseline.

The content structure that earns AI citations is fundamentally different from the content structure that chased keyword density.

How can you tell if your GEO is successful?

When GEO is working, your brand achieves four things inside AI-generated answers:

- Citation with source link: the AI references your specific URL as evidence

- Brand mention: your brand name appears in the generated response, with or without a link

- Positive sentiment: when the AI mentions your brand, it does so in a positive or neutral context

- High share of voice: your brand appears consistently across the range of prompts relevant to your category, not just occasionally

Each of these is measurable. None of them shows up in Google Analytics.

GEO vs SEO vs AEO: what’s the difference and why it matters

SEO optimizes for a ranked position in a list of links.

AEO (answer engine optimization) makes your brand the easiest and most trustworthy source for AI-powered systems to extract and cite as a direct answer, across every surface where answers are generated: AI Overviews, featured snippets, People Also Ask boxes, voice assistants, and AI chat platforms.

GEO is the broader discipline that encompasses AEO and extends to share of model, sentiment management, citation authority, and narrative control across the full generative AI ecosystem. All three are now necessary and measurable.

Which main metrics distinguish SEO from AEO and GEO in practice?

- SEO: organic traffic, keyword rankings, click-through rate.

- AEO: mentions, citations, AI Overview appearances, and zero-click impression share.

- GEO: Share of Model, brand mention share, domain influence score, sentiment distribution.

Here is how they compare across the dimensions that matter to a practitioner:

| Dimension | SEO | AEO | GEO |
|---|---|---|---|
| Goal | Rank in a list of links | Be the named, cited answer across all AI answer surfaces | Maximize brand visibility, share of model, and citation authority across the full generative AI ecosystem |
| Primary surface | Google/Bing SERP | AI Overviews, Featured Snippets, PAA boxes, ChatGPT, Perplexity, Gemini, Claude, voice assistants | Same surfaces as AEO, plus deeper narrative control across multi-turn generative responses |
| Key metric | Organic clicks, rank position | Mentions, citations, AI Overview appearances, zero-click impression share | Share of Model, brand mention share, domain influence score, sentiment distribution |
| Content requirement | Keyword relevance, topical authority | BLUF structure, schema markup, clear Q&A format, answer-first paragraphs | Passage-level extractability, stats with primary source citations, semantic entity coverage, multimodal assets |
| Measurement tool | Search Intelligence, GSC | Rank Tracker (SERP features), AI Brand Visibility | AI Brand Visibility, AI Traffic |
| Competitive dynamic | 10 links compete on a page | 1-3 sources dominate the extracted answer | 2-7 sources cited per generative response; brand narrative shapes the full answer |

Why all three now coexist

The claim that “GEO replaces SEO” is wrong, and practitioners who act on it will damage their programs.

Here is why: Google’s AI Mode still draws exclusively from Google’s search index. If your page does not rank in Google, it will not be cited in Google AI Mode. The same principle applies to Bing AI and Copilot. Traditional rankings remain the entry fee to AI citation in the largest search engines.

The correct mental model is a stack.

SEO builds the foundation: indexation, authority, rankings. Without it, neither AEO nor GEO has anything to work with, since Google AI Mode and Bing AI draw exclusively from their respective search indexes.

AEO focuses that foundation into answer extraction: being cited as the direct answer across SERP features, AI Overviews, and AI chat.

GEO is the broader discipline above both: it encompasses AEO and extends to share of model, sentiment management, and narrative control across the full generative AI ecosystem, including platforms where users go directly without starting with a search engine.

How to optimize for GEO: 8 tactics that actually move the needle

To be cited in AI-generated answers, your content must clear three bars simultaneously: it must be technically accessible to AI crawlers, structured for passage-level retrieval, and authoritative enough that LLMs consider it a credible source.

The eight tactics below address all three layers.

Each one stands alone. Implement the ones most relevant to your current gaps.

1. Make sure AI crawlers can actually read your site

The first GEO failure mode is invisible: blocking AI bots entirely. Cloudflare added a one-click setting to block AI crawlers in 2024 and, in 2025, began blocking them by default for new domains, which means a site behind Cloudflare may have quietly locked out every major AI platform’s crawling agent.

Check your robots.txt for GPTBot, PerplexityBot, ClaudeBot, and Google-Extended. If they are disallowed, you do not exist on those platforms.
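A minimal sketch of that check, using Python's standard-library robots.txt parser. The sample rules and the `blocked_agents` helper are illustrative assumptions; in practice you would pass in the text of your own robots.txt.

```python
from urllib.robotparser import RobotFileParser

# The four tokens named above.
AI_AGENTS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Google-Extended"]

def blocked_agents(robots_txt, url="https://example.com/"):
    """Return the AI agents that these robots.txt rules disallow for the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_AGENTS if not parser.can_fetch(agent, url)]

# Hypothetical robots.txt that blocks one AI crawler and allows everyone else.
SAMPLE = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_agents(SAMPLE))
```

An empty list means none of the four tokens is disallowed for that URL. It says nothing about blocks applied elsewhere, such as at a CDN or firewall.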

Check your server logs for these user agents to confirm crawl activity. Beyond permissions, ensure important content is server-side rendered rather than relying on JavaScript execution: many AI crawlers do not execute JavaScript, so client-rendered content is functionally invisible to them.
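One way to confirm crawl activity is to count hits per AI user agent in your access logs. This sketch assumes each log line contains the raw user-agent string. The sample lines are made up, and real user-agent strings will differ in detail.

```python
from collections import Counter

# Google-Extended is left out: it is a robots.txt control token,
# not a user agent that shows up in request logs.
AI_AGENT_TOKENS = ["GPTBot", "PerplexityBot", "ClaudeBot"]

def count_ai_hits(log_lines):
    """Count log lines whose text contains each AI crawler token (case-insensitive)."""
    hits = Counter()
    for line in log_lines:
        lowered = line.lower()
        for token in AI_AGENT_TOKENS:
            if token.lower() in lowered:
                hits[token] += 1
    return dict(hits)

# Hypothetical access-log lines.
SAMPLE_LOG = [
    '203.0.113.5 "GET /guide HTTP/1.1" 200 "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.5 "GET /pricing HTTP/1.1" 200 "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '198.51.100.7 "GET /guide HTTP/1.1" 200 "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.9 "GET / HTTP/1.1" 200 "Mozilla/5.0 (Windows NT 10.0) Firefox/130.0"',
]

print(count_ai_hits(SAMPLE_LOG))
```

A crawler that is allowed in robots.txt but never appears here is worth investigating.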

2. Open every section with a standalone answer (BLUF structure)

AI engines retrieve passages, not pages. When an LLM searches for a source to cite for “what is GEO,” it evaluates whether a specific paragraph can stand alone as a useful answer, without requiring the reader to understand the surrounding context.

A section that buries its answer in the third paragraph will lose citation share to a section that opens with a 30-60 word direct answer.

This structure is called BLUF (Bottom Line Up Front). Apply it to every H2 section: state the direct answer first, then expand. The first sentence of every major section should be extractable as a standalone citation. If it requires reading the section above to make sense, rewrite it.
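A rough way to audit this at scale is to check the length of each section's opening paragraph against the 30-60 word range mentioned above. The sketch assumes sections are plain text with paragraphs separated by blank lines. Word count is only a proxy and cannot tell whether the opening actually stands alone.

```python
def opening_word_count(section_text):
    """Number of words in the first paragraph of a section."""
    first_paragraph = section_text.strip().split("\n\n")[0]
    return len(first_paragraph.split())

def bluf_length_ok(section_text, low=30, high=60):
    """True if the opening paragraph falls inside the target word range."""
    return low <= opening_word_count(section_text) <= high

# Hypothetical sections: one opens with a 40-word answer, one with a 5-word teaser.
good = " ".join(["word"] * 40) + "\n\nSupporting detail follows here."
weak = "Let us dive right in.\n\n" + " ".join(["word"] * 40)

print(bluf_length_ok(good), bluf_length_ok(weak))
```

Sections that fail the check are candidates for a manual rewrite, not automatic failures.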

3. Add statistics, primary source citations, and named frameworks

Content with verifiable statistics and named citations achieves 30-40% higher AI visibility than unoptimized content, according to Princeton’s research. This is the single most empirically validated GEO tactic.

Every quantified claim should be grounded by data: number/population/timeframe + source. “AI chatbot referral traffic grew 357% year over year, reaching 1.1 billion referral visits in June 2025, according to Similarweb’s 2025 Generative AI report” is citable. “AI traffic is growing rapidly” is not.

Link statistics directly to their primary sources: the original paper, the official report, the company’s own research page. A blog post summarizing a study is not an acceptable substitute for linking to the study itself.

4. Cover the full fan-out query space for your topic

When a user submits a complex query to ChatGPT or Perplexity, the AI does not search for that exact phrase. It decomposes the query into multiple parallel sub-queries and retrieves content for each independently.

This is called query fan-out, and it is one of the most important architectural realities of GEO.

Fan-out sub-queries are non-deterministic: the specific sub-query text varies significantly across repeated runs of the same anchor query. That means you cannot optimize for specific sub-query wording.

What you can do is ensure your article covers all seven question categories: definition, comparison, how-to, use case, objection, entity expansion, and metric. A well-structured long-form article that covers all seven as independently citable sections will earn citation share across the full fan-out space for a given anchor query.
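As a simple coverage check, you can tag each section of an article with the question category it answers and list what is missing. The tags below are assigned by hand for illustration. Nothing here classifies sections automatically.

```python
# The seven question categories named above.
CATEGORIES = [
    "definition", "comparison", "how-to", "use case",
    "objection", "entity expansion", "metric",
]

def missing_categories(section_tags):
    """Return the categories that no section has been tagged with, in canonical order."""
    covered = set(section_tags.values())
    return [category for category in CATEGORIES if category not in covered]

# Hypothetical hand-tagged outline: heading -> category.
OUTLINE = {
    "What is generative engine optimization (GEO)?": "definition",
    "GEO vs SEO vs AEO": "comparison",
    "How to optimize for GEO": "how-to",
    "What metrics and KPIs do you need to track?": "metric",
}

print(missing_categories(OUTLINE))
```

Each missing category suggests a section to add or a separate page to link to.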

5. Build brand presence in third-party sources and directories

Yext research shows that 86% of citations in AI-generated answers come from brand-controlled or brand-adjacent sources, including your own website, your profiles in directories and review platforms, and your brand mentions in high-authority publications. Your owned content is your primary citation asset.

A Seer Interactive study found a 65% correlation between the frequency of brand mentions in web content and in AI-generated responses. The practical implication: building a digital PR footprint, earning mentions in high-authority publications, and maintaining accurate profiles on platforms like G2 and Wikipedia are not separate from GEO. They are GEO.

6. Implement llms.txt for AI discoverability

llms.txt is an emerging convention, modeled loosely on robots.txt, that allows sites to indicate which pages they consider authoritative for AI engines to use as sources.

It is not a verified standard or a direct ranking signal in any confirmed algorithm, but it serves two practical purposes: it reduces token waste (by having AI agents fetch clean Markdown files rather than JavaScript-heavy HTML) and signals topical authority to AI systems that respect the convention.

For sites with complex architectures or large content libraries, llms.txt provides a map to your most defensible, citation-worthy pages. It is intended to support GEO by giving AI systems a clear path to your best content.
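A small generator sketch, assuming the layout described in the public llms.txt proposal: an H1 title, a blockquote summary, then H2 sections of Markdown links. The site name, URLs, and notes are placeholders, and individual AI systems may not read the file at all.

```python
def build_llms_txt(site_name, summary, sections):
    """Render an llms.txt-style Markdown file from {heading: [(title, url, note), ...]}."""
    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for heading, links in sections.items():
        lines.append(f"## {heading}")
        lines.append("")
        for title, url, note in links:
            lines.append(f"- [{title}]({url}): {note}")
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"

# Hypothetical site content.
llms_txt = build_llms_txt(
    "Example Co",
    "Research and guides on AI search visibility.",
    {
        "Guides": [
            ("GEO guide", "https://example.com/geo.md", "What GEO is and how to measure it"),
        ],
    },
)
print(llms_txt)
```

The output would be served at the site root as `/llms.txt`.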

7. Execute digital PR with AI citation surfaces in mind

Traditional digital PR (earning brand mentions and backlinks from high-authority publications) remains a core GEO tactic because LLMs are trained on and retrieve from the same high-authority sources that dominate traditional search.

A brand mentioned consistently in Forbes, Newsweek, and industry-specific publications will be recognized by LLMs as an authority in that space.

The GEO-specific addition: prioritize publications that AI platforms are known to cite.

Platforms like Perplexity, which visibly cite sources in every response, show a clear preference for authoritative industry publications, official documentation, and primary research. Pitching guest content, contributing data to reports, and securing inclusion in “best of” roundups on high-authority domains are direct GEO investments, not just SEO investments.

8. Seed content across the platforms LLMs pull from

AI engines draw from sources beyond traditional web pages. Reddit, LinkedIn, YouTube, G2, and Quora are frequently cited in AI-generated responses because they represent high-signal user-generated content. A brand that is discussed positively and accurately on these platforms has a larger citation surface area than one that only maintains its own site.

This is what LLM seeding means: systematically distributing your brand’s narrative, data points, and key claims across the platforms that generative engines index heavily.

It is not spam. It is presence.

The difference is whether the content you distribute provides genuine value on the platform where it lives.

Platform differences: how ChatGPT, Perplexity, Gemini, and Google AI select their sources

Not all AI engines cite content the same way. Optimizing for GEO without understanding platform-specific citation mechanics means leaving share of voice on the table in every platform you are not accounting for.

Here is how the major platforms differ:

| Platform | Source mechanism | Index used | Citation visibility | Recency weight | Primary optimization lever |
|---|---|---|---|---|---|
| ChatGPT (search mode) | Real-time web retrieval + direct crawl | Bing index + Google SERP + OpenAI direct | Inline citations in search mode | High | SEO + AI bot access + BLUF structure |
| Perplexity | Always-on real-time retrieval | Own index, multiple sources | Always visible; high citation density | Very high | Technical crawlability + passage clarity + fresh content |
| Google AI Overviews / AI Mode | Google’s search index exclusively | Google’s own index | Embedded in the overview card | Medium | SEO fundamentals + E-E-A-T + structured data |
| Claude | Curated, high-authority sources | Training data + selective web | Selective; fewer citations | Medium | Domain authority + authoritative sourcing + llms.txt |
| Gemini | Google’s search index + Bard training | Google index | Source cards visible | Medium | Google SEO + entity optimization |

The practical takeaway:

- Google AI Overviews and AI Mode are, at their core, a Google SEO problem. If you rank in Google, you are eligible.

- Microsoft’s official guidelines for generative search align with this architecture: index-first for Bing-powered platforms, technical crawlability for real-time retrieval platforms.

- Perplexity and ChatGPT in search mode require a separate layer of attention to technical crawlability, real-time indexation, and content freshness.

- Claude and Gemini fall somewhere between, with Claude being particularly selective about what it considers authoritative enough to cite.

What metrics and KPIs do you need to track for measuring GEO?

Traditional SEO tools do not measure GEO performance. A brand can be the most-cited source in ChatGPT for its entire product category and register zero activity in Google Analytics.

This is the measurement blind spot: AI-driven discovery creates influence, consideration, and brand preference that standard analytics dashboards miss entirely.

The conversion path in AI search often looks like this: a user asks ChatGPT which marketing analytics platform to use, ChatGPT mentions your brand favorably, the user Googles your brand name two days later, and converts via a branded search.

Your attribution model credits the branded search. The AI mention that initiated the journey is invisible.

Measuring GEO requires purpose-built AI visibility tracking.

1. Brand visibility score

The percentage of AI-generated responses, across a defined set of relevant prompts, that include your brand. This is the headline GEO metric.

The underlying formula is Share of Model (SoM): the number of AI answers that mention your brand divided by the total number of AI answers in the tracked set. A brand visibility score of 30% means your brand appears in 3 out of 10 AI responses to your target query cluster.
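The calculation can be sketched in a few lines. This version assumes you already have the raw text of each AI response and treats a case-insensitive substring match as a "mention", which is a simplification. The brand name and responses are invented.

```python
def share_of_model(responses, brand):
    """Percentage of responses that mention the brand at least once."""
    if not responses:
        return 0.0
    mentioned = sum(1 for text in responses if brand.lower() in text.lower())
    return 100 * mentioned / len(responses)

# Hypothetical tracked set: 10 responses, 3 of which mention "Acme".
responses = (
    ["Acme is a strong option for small teams."] * 3
    + ["Other tools are commonly recommended here."] * 7
)

print(share_of_model(responses, "Acme"))
```

Counting each response once, however many times it names the brand, keeps the score aligned with the "3 out of 10 responses" reading above.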

2. Brand mention share

Your brand’s proportion of all brand mentions across AI responses in your category. If you are in email marketing, this measures how often your brand appears relative to every other named brand across all AI answers in that space.

Think of it as Gen AI’s share of voice.
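A sketch of the same idea in code, assuming a fixed list of brands in the category and simple substring matching. Both are simplifications, and the brand names are invented.

```python
from collections import Counter

def brand_mention_share(responses, brands):
    """Each brand's percentage of all brand mentions across the responses."""
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            if brand.lower() in lowered:
                counts[brand] += 1
    total = sum(counts.values())
    return {b: (100 * counts[b] / total if total else 0.0) for b in brands}

# Hypothetical category responses.
category_responses = [
    "Acme and Bolt are popular choices.",
    "Bolt is a solid pick for startups.",
    "Try Bolt or Cinder.",
    "Acme works well for agencies.",
]

shares = brand_mention_share(category_responses, ["Acme", "Bolt", "Cinder"])
print(shares)
```

The denominator differs from the brand visibility score: here it is total brand mentions across the category, not total responses.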

3. Topical visibility

Your brand visibility score broken down by topic cluster, benchmarked against competitors. This is where the actionable gaps live. A brand with 25% overall visibility but 4% in its highest-value topic has a topic gap, not a general visibility problem.

4. Prompt-level visibility

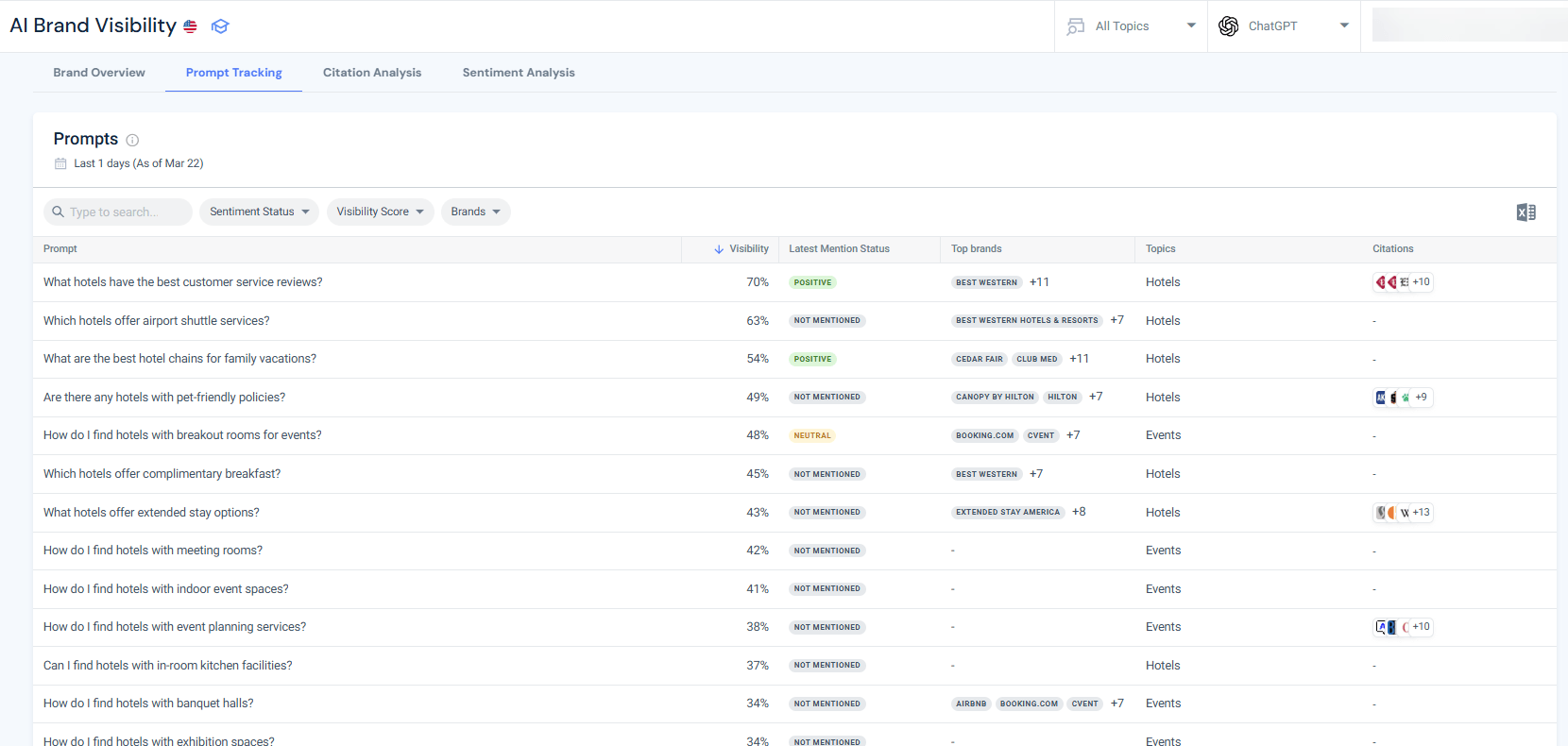

Your brand’s presence at the individual-prompt level across the full query set you track. A brand with strong topical visibility but zero presence on 80% of its priority prompts has a content coverage problem, not a GEO execution problem. The Prompt Analysis tool surfaces exactly which prompts your brand wins and which it does not.

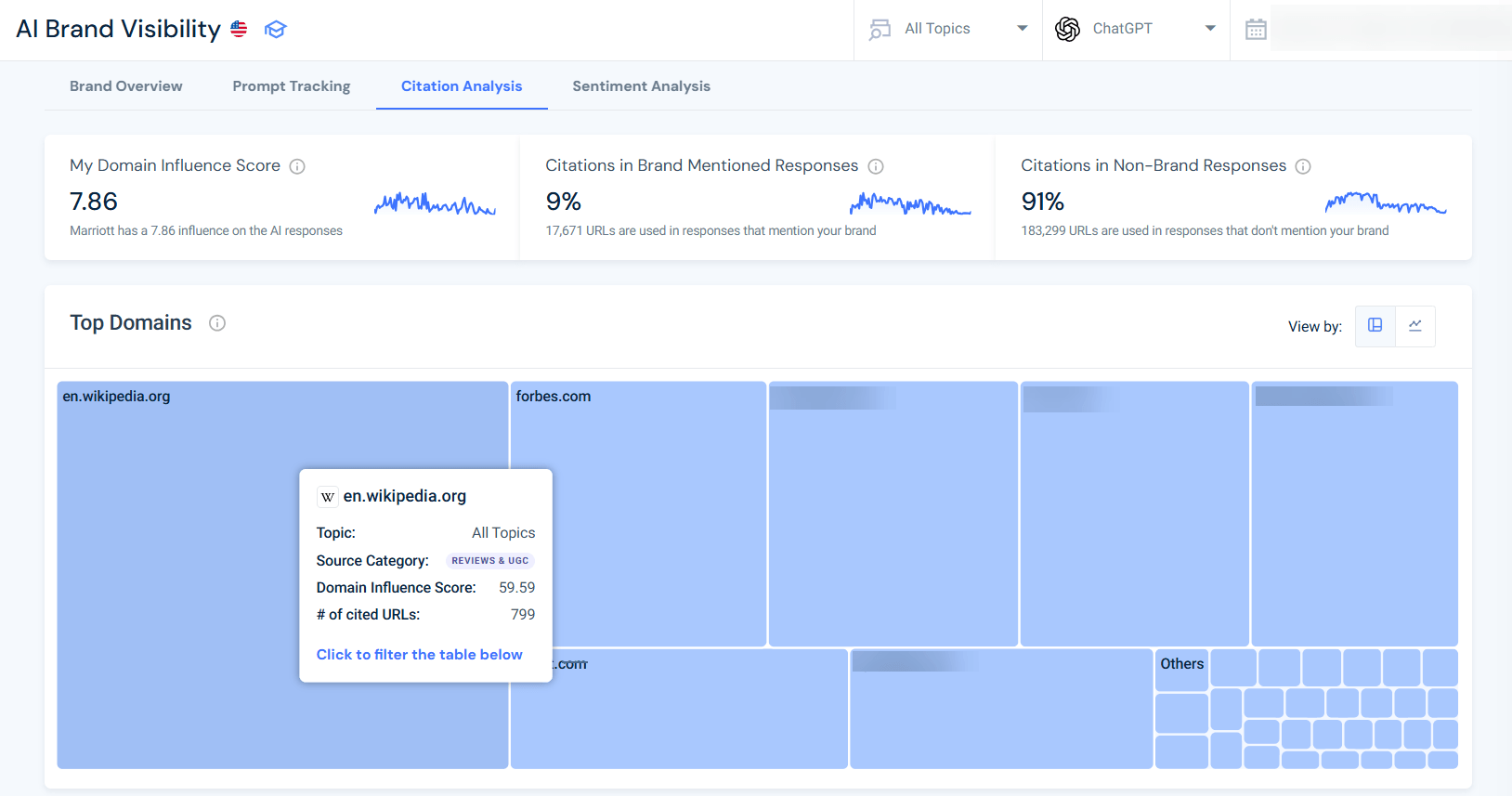

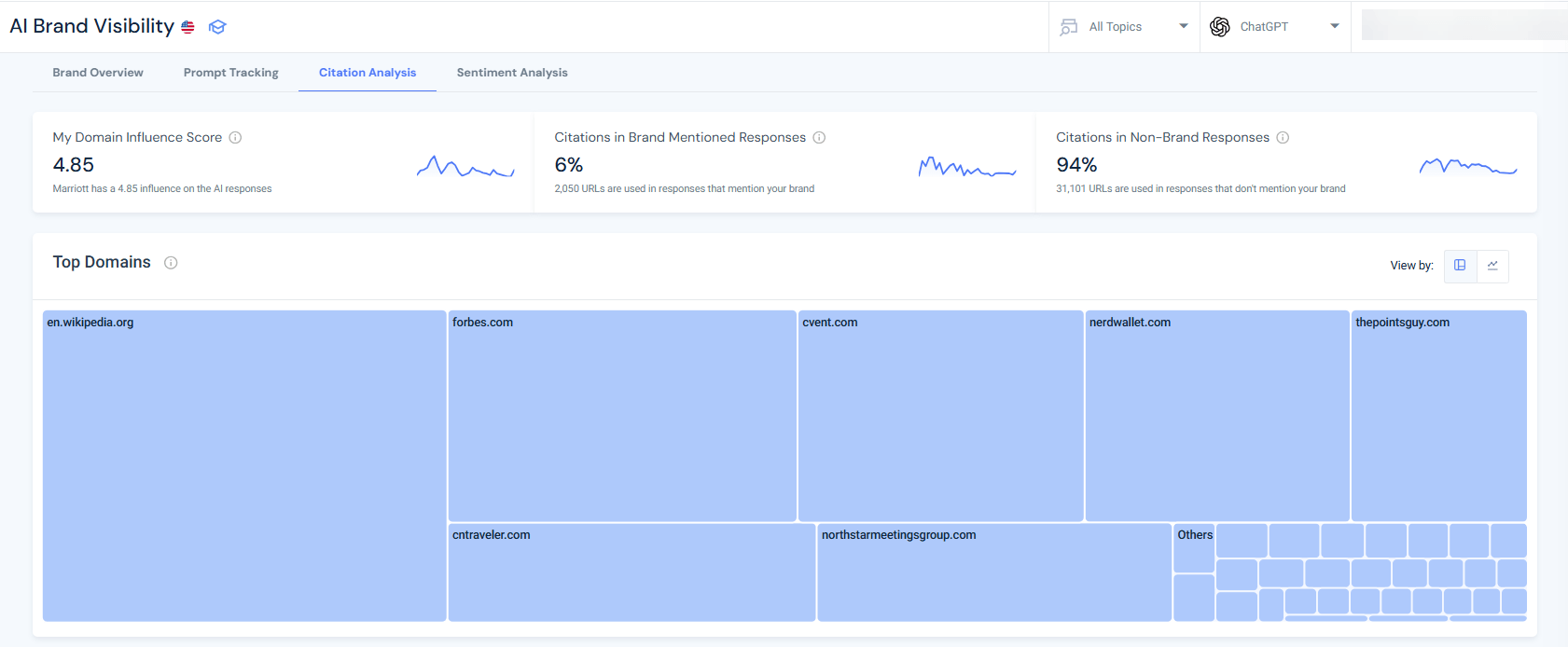

5. Domain influence and citation share

How often your domain is cited as a source in AI answers, relative to all domains cited in responses mentioning your brand. Mentions without citations are influence, and citations with source links are authority.

A low domain influence score means AI engines mention your brand but pull their evidence from third-party sources, not your own content.
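One way to compute a citation share along these lines, assuming each tracked response records whether it mentions your brand and which URLs it cited. The field names and domains are hypothetical, and a commercial tool's domain influence score may be defined differently.

```python
from urllib.parse import urlparse

def host(url):
    """Lowercased hostname with a leading 'www.' removed."""
    netloc = urlparse(url).netloc.lower()
    return netloc[4:] if netloc.startswith("www.") else netloc

def domain_citation_share(responses, own_domain):
    """Percentage of citations, in responses mentioning the brand, that point to own_domain."""
    cited = [
        host(url)
        for response in responses
        if response["mentions_brand"]
        for url in response["cited_urls"]
    ]
    if not cited:
        return 0.0
    own = sum(1 for h in cited if h == own_domain or h.endswith("." + own_domain))
    return 100 * own / len(cited)

# Hypothetical tracked responses.
tracked = [
    {"mentions_brand": True, "cited_urls": ["https://www.brand.example/pricing", "https://reviews.example/a"]},
    {"mentions_brand": True, "cited_urls": ["https://reviews.example/b", "https://news.example/c"]},
    {"mentions_brand": False, "cited_urls": ["https://brand.example/blog"]},
]

print(domain_citation_share(tracked, "brand.example"))
```

Here the brand is mentioned in two responses, but only one of the four citations in those responses points to its own domain.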

6. Sentiment distribution

The breakdown of AI-generated mentions of your brand into positive, neutral, and negative, by topic and against competitors. Sentiment matters because AI engines in recommendation mode make active judgments about brand suitability. A brand with consistently neutral AI sentiment across commercial prompts has work to do.

Note: If you have negative sentiment, you might need to look a little deeper at how your content is structured and how your brand is presented in AI engines. Read my guide for fixing negative sentiment in AI if you spot this issue.

7. AI traffic and conversion (optional)

The volume of referral visits from AI platforms to your site, and how those visits convert. AI-sourced traffic is currently small for most brands, but it converts at measurably higher rates because users arrive with strong purchase intent.

Tracking it via Similarweb’s AI Traffic Tracker connects your GEO metrics to actual business impact.

Citation volatility: why GEO measurement must be continuous

A 2025 AirOps study, based on 45,000 citations, found that only 30% of brands stay visible from one AI answer to the next, and just 20% remain present across five consecutive runs of the same query.

That means AI citation share is highly volatile: models rebalance to prioritize diversity, freshness, and coverage, and rebuild answers from scratch each time. A brand visible in Monday’s AI response may be absent in Tuesday’s.
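You can measure this volatility on your own prompts by running the same query several times and comparing which brands appear. The sketch below is one simple way to do that and is not the methodology of the study above. The brand letters are placeholders.

```python
def persistence_rate(runs):
    """Average share of brands in one run that also appear in the next run."""
    rates = []
    for current, following in zip(runs, runs[1:]):
        if current:
            rates.append(len(current & following) / len(current))
    return sum(rates) / len(rates) if rates else 0.0

def always_present(runs):
    """Brands that appear in every run."""
    return set.intersection(*runs) if runs else set()

# Hypothetical brand sets from five consecutive runs of the same query.
runs = [
    {"A", "B", "C", "D"},
    {"A", "B", "E", "F"},
    {"A", "G", "H", "I"},
    {"A", "B", "J", "K"},
    {"A", "L", "M", "N"},
]

print(persistence_rate(runs), always_present(runs))
```

Tracking both numbers over time shows whether your brand is in the stable core of an answer or in the rotating remainder.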

This is not a reason to dismiss GEO. It is a reason to measure frequently. A brand with strong topical authority, regularly updated content, and broad platform presence will show higher average citation rates even in a volatile environment.

The volatility creates opportunity for brands willing to invest in consistent, well-structured, authoritative content across the full fan-out query space.

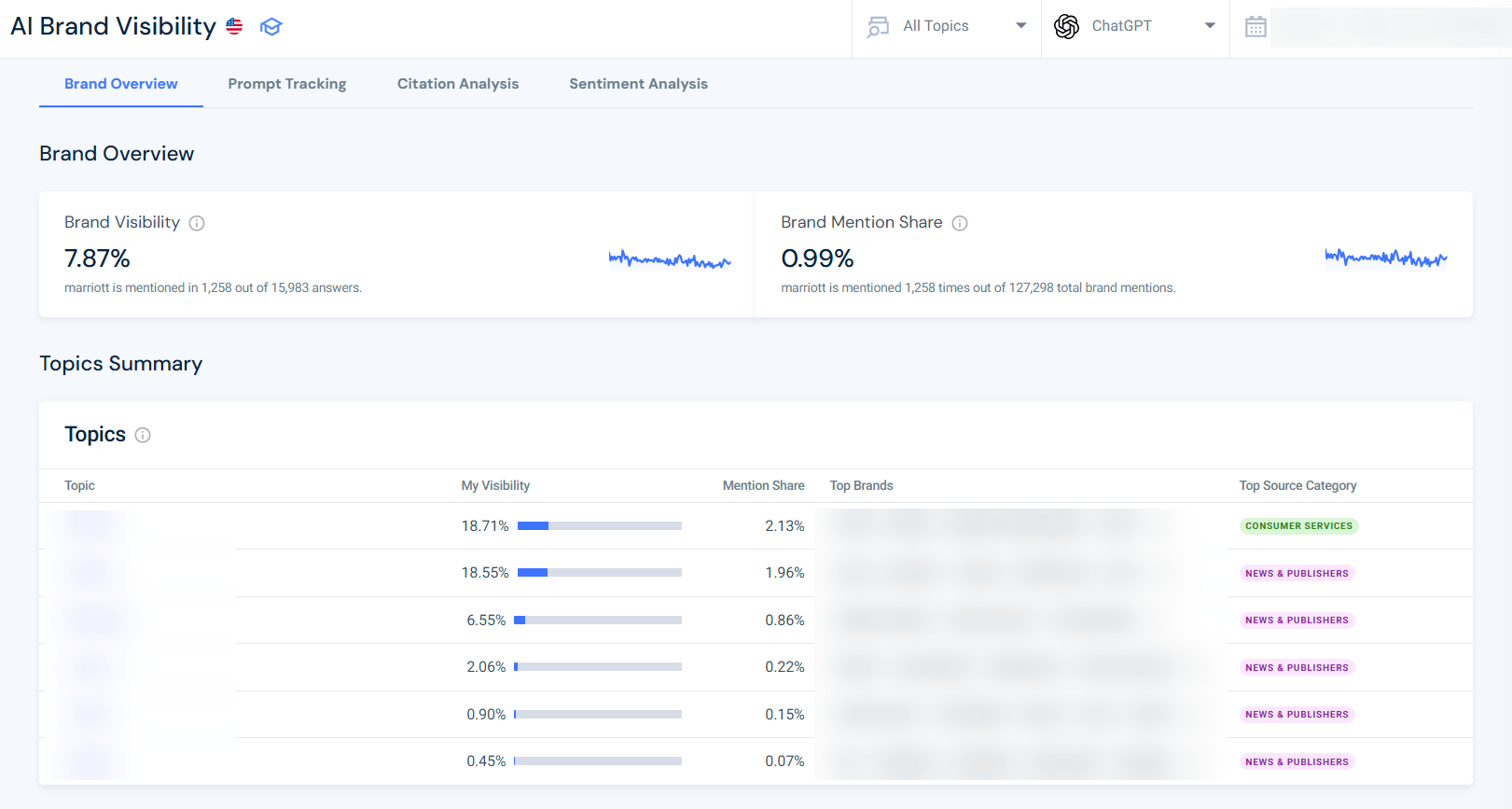

How Similarweb measures GEO performance: a real example

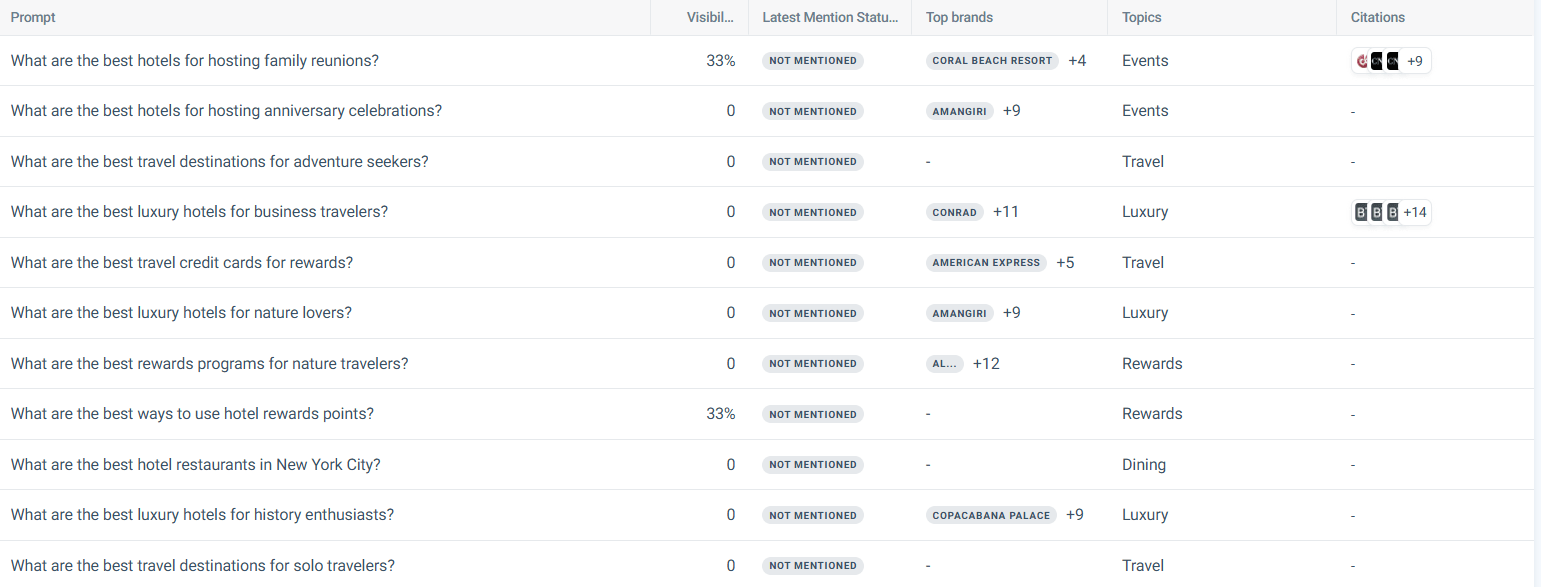

Abstract KPI frameworks are useful. Real data is better. Here is what GEO measurement actually looks like using Similarweb’s AI Search Intelligence toolkit, the same toolset behind the 2026 Generative AI Brand Visibility Index, applied to a travel brand tracking 180 AI-generated responses across six topic clusters: Travel, Hotels, Luxury, Rewards, Events, and Dining.

The headline finding: 92.8% invisible.

Of 180 relevant prompts, the brand appeared in AI-generated answers just 13 times: a Share of Model of 7.2%. For queries covering exactly the topics this brand should own (luxury hotels, business travel, rewards programs, hotel dining), the brand simply did not exist in the AI response. No mention. No citation. No consideration.

That 7.2% is the number GEO exists to move.

Who is winning instead?

The brands dominating AI responses for travel queries are Expedia (appearing in 36 responses), Booking.com (33), The Ritz-Carlton (24), Marriott Bonvoy (21), and Hotels.com (19). These are not winning because of superior SEO. They are winning because AI engines pull from the same third-party aggregators and lists, where those brands appear consistently.

The citation source data confirms this. The domains AI engines cited most across those 180 prompts were:

- Cvent (22 citations, primarily their annual Top Meeting Hotels rankings)

- Wikipedia (11)

- TripAdvisor (9)

- Kiplinger (7)

- NerdWallet (5)

Not a single citation went to a hotel brand’s own homepage.

The AI is reading the web the way a researcher does: it trusts the review platforms, the industry rankings, and the financial comparison sites, not the brand’s own marketing copy.

For more instructions on how to analyze your brand citations, see the full AI citation analysis guide.

What do the positive mentions reveal?

The four prompts where the brand appeared with positive sentiment were all tied to being on a structured third-party list. The prompt “What are the best hotels for hosting networking events?” triggered positive sentiment because Cvent had published its annual Top Meeting Hotels rankings on BusinessWire, and the AI cited that press release directly.

The brand appeared in AI answers not because of anything on its own website, but because it was ranked on an authoritative industry list that AI engines treat as a credible source.

This is Tactic 5 in action: build brand presence in third-party sources and directories. The brands that disappear from AI responses are those that publish only on their own sites.

Where are the gaps?

The not-mentioned prompts include “best luxury hotels for business travelers,” “best rewards programs for adventure travelers,” and “best travel apps for booking accommodations,” all high-intent, high-commercial-value queries.

A user asking any of these questions is close to a booking decision. The brand’s absence from those AI responses is not a visibility problem. It is a revenue problem.

To configure this kind of measurement in Similarweb’s AI Brand Visibility module: set up a campaign with your domain, select your relevant topic clusters, and run it against ChatGPT, Gemini, Perplexity, and Google AI Mode. The dashboard surfaces exactly which prompts trigger mentions, which trigger citations, and which return nothing, turning the measurement blind spot into a prioritized action list.

The TRACE framework: a five-step GEO diagnosis

The travel brand example above is not just illustrative. It is a diagnostic workflow. Run it against any brand, and you get a prioritized GEO action list in five steps:

T: Track

Use Similarweb AI Brand Visibility to establish your baseline Share of Model. Configure a campaign for your domain, select the topic clusters your customers care about (not SEO topics, the actual questions they ask AI: “best hotels for business travel,” “top rewards programs for families,” “which CRM for a 50-person team”), and run it across ChatGPT, Gemini, Perplexity, and Google AI Mode. Record your Share of Model and sentiment breakdown.

R: Reveal

Sort and analyze prompts by sentiment. The not-mentioned group is your gap list. These are the queries that AI is actively answering for your customers without you in the room. For the travel brand, that included “best luxury hotels for business travelers” and “best rewards programs for adventure travelers,” both prompts a prospect uses minutes before making a booking decision.

A: Audit

For each not-mentioned prompt, check what sources AI *is* citing. This is the most actionable step. If Cvent’s Top Meeting Hotels list appears in 22 citations and you are not on it, that is your highest-leverage GEO action, not a new blog post.

The citation source pattern tells you exactly which third-party authorities AI trusts in your category.
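The audit step amounts to counting cited domains across the prompts where your brand is absent. A minimal sketch, assuming each tracked prompt is a record with a sentiment label and a list of cited URLs. The field names and `.example` domains are hypothetical.

```python
from collections import Counter
from urllib.parse import urlparse

def top_cited_domains(rows, n=3):
    """Most-cited domains across prompts where the brand was not mentioned."""
    counts = Counter()
    for row in rows:
        if row["sentiment"] != "not mentioned":
            continue
        for url in row["cited_urls"]:
            counts[urlparse(url).netloc.lower()] += 1
    return counts.most_common(n)

# Hypothetical tracked prompts.
rows = [
    {"sentiment": "not mentioned",
     "cited_urls": ["https://rankings.example/top-hotels", "https://rankings.example/events",
                    "https://encyclopedia.example/hotel"]},
    {"sentiment": "not mentioned",
     "cited_urls": ["https://rankings.example/meetings", "https://reviews.example/city"]},
    {"sentiment": "positive",
     "cited_urls": ["https://finance.example/cards"]},
]

print(top_cited_domains(rows, n=1))
```

The domain at the top of this list is the first place to ask whether your brand is listed, ranked, or reviewed.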

C: Close

Act on the authority gap, not the content gap. Get onto the lists, rankings, and directories that AI engines already cite. Earn the press release on BusinessWire. Update the Wikipedia entry. Pitch the NerdWallet comparison.

These are not PR vanity metrics. They are direct inputs to your Share of Model.

E: Evaluate

Re-run the same prompt set monthly. Share of Model is not a one-time audit. AI engines constantly rebalance their citation sources. A brand that gained visibility in January may lose it in February if a competitor secures a new Cvent ranking or an inclusion in a Forbes roundup. The evaluation cadence is what turns a one-time audit into a GEO program.

GEO prompt coverage tracker template

Use this template to run the TRACE diagnostic against your own brand. Fill in one row per tracked prompt. The structure mirrors what Similarweb’s AI Brand Visibility campaign dashboard surfaces.

Copy the full template, including instructions, here

| Prompt | Topic cluster | LLM | Sentiment | Competing brands cited | Sources AI cited | Gap type | Priority action |
|---|---|---|---|---|---|---|---|
| “Best [your category] for [use case]” | [cluster] | ChatGPT | Not mentioned | Brand A, Brand B | TripAdvisor, Cvent | Third-party authority gap | Get listed on [source] |
| “Top [your category] with [feature]” | [cluster] | Perplexity | Neutral | Brand A | Wikipedia, Forbes | Sentiment gap | Publish data-backed content on [feature] |
| “How do I find [your category] that [outcome]” | [cluster] | ChatGPT | Not mentioned | None | Reddit, G2 | UGC gap | Seed [platform] with accurate brand presence |
| “Best rewards program for [persona]” | [cluster] | Gemini | Not mentioned | Brand A, Brand C | NerdWallet, Kiplinger | Financial media gap | Pitch comparison inclusion to [publication] |

Gap type definitions:

- Third-party authority gap: AI cites a ranking or directory list you are not on

- Sentiment gap: AI mentions your brand, but without a clear recommendation

- UGC gap: AI pulls from community platforms (Reddit, G2, Quora) where your brand lacks presence

- Financial media gap: AI cites comparison/review publications that do not include you

- Content gap: AI cites a question-specific content type (how-to, checklist) you have not published

Run this tracker monthly. Share of Model is the headline number, and the Gap type column is where the action lives. For your content optimization, adapt your keyword research for GEO.
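If you keep the tracker in code instead of a spreadsheet, the same columns map onto a simple record. The sketch below exports rows to CSV and groups priority actions by gap type. The helper names and sample rows are illustrative, not part of any Similarweb export format.

```python
import csv
import io

# Column names taken from the template above.
COLUMNS = [
    "Prompt", "Topic cluster", "LLM", "Sentiment",
    "Competing brands cited", "Sources AI cited", "Gap type", "Priority action",
]

def tracker_csv(rows):
    """Serialize tracker rows (dicts keyed by COLUMNS) to CSV text."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=COLUMNS)
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()

def actions_by_gap(rows):
    """Group priority actions under their gap type."""
    grouped = {}
    for row in rows:
        grouped.setdefault(row["Gap type"], []).append(row["Priority action"])
    return grouped

# Hypothetical tracker rows.
tracker_rows = [
    dict(zip(COLUMNS, ["Best CRM for startups", "CRM", "ChatGPT", "Not mentioned",
                       "Brand A, Brand B", "G2, Reddit", "UGC gap", "Seed G2 reviews"])),
    dict(zip(COLUMNS, ["Top CRM with automation", "CRM", "Perplexity", "Neutral",
                       "Brand A", "Wikipedia", "Sentiment gap", "Publish benchmark data"])),
]

print(tracker_csv(tracker_rows))
print(actions_by_gap(tracker_rows))
```

Grouping by gap type turns the monthly run into a short action list per gap type.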


The GEO audit: where to start

If you are not yet measuring GEO and want a practical starting point, run a GEO audit against your current content. A GEO audit assesses: AI crawl accessibility, BLUF structure compliance across your top pages, fan-out query coverage, third-party mention footprint, and baseline citation frequency in the AI platforms most relevant to your business.

The output is a prioritized list of fixes, not a new content strategy from scratch.

What are the best GEO tools for 2026?

How to choose your GEO toolkit

GEO requires a different toolset from SEO. Most traditional rank trackers and analytics platforms were not built to measure AI visibility, and several vendors have added surface-level “AI features” to existing products without rebuilding the underlying data model. Before choosing tools, apply four criteria:

- Does it measure actual AI engine behavior rather than just Google SERP features? An AEO tool tracks AI Overviews. A GEO tool must track ChatGPT, Perplexity, Gemini, and Google AI Mode independently.

- Does it connect visibility to traffic? Brand mentions in AI answers are only valuable if you can tie them to referral visits and conversion data. Most point solutions stop at visibility.

- Is it backed by independent data? Some tools run prompts manually or rely on self-reported inputs. Independent, panel-based data at scale produces more reliable benchmarks.

- Does it cover competitive benchmarking? Knowing your own Share of Model is useful. Knowing it relative to your top three competitors is actionable.

The top GEO tools for 2026

Similarweb AI Search Intelligence

The most comprehensive GEO toolset available. Similarweb is the only solution that combines AI Brand Visibility, AI Traffic analytics, Prompt Analysis, Citation Analysis, and Sentiment Analysis in a single suite, all backed by independent panel-based data covering the full web rather than self-reported or sampled inputs.

It tracks brand mention share, topical visibility, domain influence, sentiment distribution, and prompt-level coverage across ChatGPT, Gemini, Perplexity, and Google AI Mode simultaneously. The AI Traffic module closes the loop by connecting visibility signals to actual referral visits and page-level conversion data, something no other tool in this category does.

For AEO, the Rank Tracker has a SERP Features dashboard that tracks AI Overview appearances, featured snippet wins, and PAA coverage alongside standard rankings, making it the only tool that covers the full SEO-AEO-GEO stack on a single platform.

Best for: brands that need end-to-end GEO measurement, competitive benchmarking, and traffic attribution in one place.

Profound

A dedicated AI visibility tracker that monitors brand mentions across ChatGPT, Perplexity, Claude, and Gemini. Profound runs structured prompt sets against multiple LLMs and returns brand visibility scores, competitor share, and source attribution. It is strong on prompt coverage and multi-model tracking, and has a clean interface suited to GEO reporting workflows.

The gap: no traffic attribution, no integration with traditional SEO metrics, and no independent web data, so visibility findings cannot be connected to downstream business impact.

Best for: teams that want a focused AI visibility monitor and are comfortable using a separate tool for web traffic and SEO data.

Otterly.ai

Designed specifically for tracking brand visibility in AI-generated answers, with a prompt management interface that lets users build and run structured prompt sets across multiple models. Good for prompt-level gap analysis and share-of-voice tracking across a defined query set.

The gap: limited depth of citation analysis, no traffic data, and no topical benchmarking against competitors at scale.

Best for: content teams running prompt-level audits on a defined topic cluster.

BrightEdge

An enterprise SEO platform that added AI Overview tracking and some generative AI visibility features to its existing rank and content intelligence suite. Strong on traditional SEO metrics and content optimization workflows, and the AI Overview integration is useful for AEO measurement.

The gap: coverage is weighted toward Google surfaces. ChatGPT and Perplexity visibility are not measured at the same depth as Google AI Overviews, and there is no AI traffic attribution.

Best for: enterprise teams already on BrightEdge that want to extend existing SEO workflows into AEO without switching platforms.

Ahrefs Brand Radar

A well-established SEO toolset that added AI Overview visibility tracking to its rank tracker in 2025. Useful for monitoring which keywords trigger AI Overviews and whether your pages appear in them, which makes it a solid AEO tool for Google-specific surfaces.

The gap: no ChatGPT, Perplexity, or Gemini tracking; no brand-mention share, no citation analysis, and no AI traffic data. It is an SEO and AEO tool, not a GEO tool.

Best for: teams using Ahrefs for SEO who want basic AI Overview tracking without a separate platform investment.

What GEO looks like in 2026: the agentic frontier

Most GEO content focuses on conversational AI (ChatGPT, Perplexity) and AI-enhanced search (Google AI Mode, Bing AI). These are important surfaces. But the next phase of GEO is already active in early form: agentic AI.

AI agents are systems that do not just answer questions. They take actions on behalf of users: shopping, comparing vendors, booking appointments, researching purchases. When a user deploys an AI agent to find the best CRM for a growing SaaS company, the agent does not return a list of results. It synthesizes a recommendation and, in some implementations, initiates a trial signup or an outreach sequence on the user’s behalf.

The citation economics of agentic AI are even more constrained than conversational AI. A conversational model might cite 5-7 sources. An agent acting on a purchase decision will likely converge on one or two.

The brand that is authoritative, consistently mentioned, and well-represented in the sources agents pull from will win that shortlist. The brand that lacks a structured presence in the agentic AI information layer will not appear.

Optimizing for agentic GEO is not fundamentally different from what has been described above: structured content, authoritative sourcing, technical accessibility, and broad platform presence.

The stakes are just higher, and the window to establish citation authority before the space matures is shorter.

The most concrete technical development on this front is WebMCP, a browser-native standard jointly developed by Google and Microsoft that lets websites expose structured, callable tools to AI agents directly.

Instead of an agent guessing how to use your site by parsing HTML or taking screenshots, a WebMCP-enabled site tells the agent exactly what actions are available and what they return. For GEO, this matters because it removes the crawlability bottleneck entirely: an agent does not need to retrieve and interpret your content if your site can answer its query directly.

It is still early, but the sites building WebMCP implementations now are establishing agent-layer authority before the space has any real competition.

Conclusion: GEO is not optional, it is the new entry fee

Every major shift in SEO has followed the same pattern. The transition from pre-social SEO to link-building dominance did not happen overnight. Neither did the shift from exact-match keywords to semantic search. In each case, the brands that recognized the shift early and built the right infrastructure accumulated compounding advantages that late movers found very hard to overcome.

GEO is that shift. The zero-click rate for news searches, which grew from 56% to 69% in a single year, is not noise. It is a structural change in how information flows from content to the user. The AI chatbot referral traffic that reached 1.1 billion visits in June 2025 and grew 357% year over year, per Similarweb’s 2025 Generative AI report, is not a trend. It is a new channel, and it is scaling faster than search itself did in its early years. Updated AI stats keep pouring in every month, and they all point in the same direction.

The brands that build citation authority now, through BLUF-structured content, primary-source data integration, broad platform presence, and systematic measurement, will own the AI answer layer in their categories. The brands that wait until GEO has a fully mature playbook will find the field occupied.

Traditional SEO is the foundation. AEO extends your visibility to zero-click answer surfaces. GEO is everything beyond that: being cited, mentioned, and recommended in AI responses, where more users now start and end their research. All three disciplines reinforce each other.

None is optional if organic visibility matters to your business.

Use Similarweb’s AI Search Intelligence toolkit to measure where your brand stands today: which AI platforms drive visits, which prompts mention your brand, and where competitors are earning citation share you are not. Then use that data to prioritize your GEO investments. If you want a structured starting point, the GEO audit will tell you where the gaps are.

The answer layer is being built right now. You should be in it.

FAQ

What is generative engine optimization (GEO)?

Generative engine optimization (GEO) is the practice of structuring content and building brand authority so that AI-powered platforms, including ChatGPT, Perplexity, Google AI Overviews, Gemini, and Claude, select, cite, and surface your brand in their responses to user queries. It focuses on being cited inside the synthesized answer, not merely ranked in a list of links. GEO works alongside SEO and AEO, not as a replacement for either.

How is GEO different from SEO?

SEO optimizes for rankings on traditional search engine results pages, with organic clicks as the primary success metric. GEO optimizes for citation and mention frequency inside AI-generated responses, with Share of Model and citation frequency as the primary metrics. The core techniques overlap significantly (quality content, technical accessibility, authoritative sourcing), but GEO adds specific requirements: BLUF-structured sections, statistics with primary source citations, cross-platform brand presence, and dedicated measurement of AI-specific performance signals that traditional tools do not capture.

What is the difference between GEO and AEO?

AEO (answer engine optimization) is the practice of making your brand the easiest source for AI-powered systems to extract and cite as a direct answer. It spans all answer surfaces: AI Overviews, featured snippets, People Also Ask boxes, voice assistants, and AI chat platforms, including ChatGPT, Perplexity, Gemini, and Claude. GEO is the broader discipline that encompasses AEO and extends further to include share of model, sentiment management, narrative control, and citation authority across the full generative AI ecosystem.

AEO asks, “Are we being cited as the answer?” GEO asks, “How visible, how trusted, and how favorably represented is our brand across every AI surface?” For a full side-by-side breakdown, see our AEO vs GEO comparison.

How do I measure GEO performance, and what is Share of Model?

Share of Model (SoM) is the percentage of AI-generated responses, across a defined query set relevant to your category, that include your brand. It is the headline GEO metric. Beyond SoM, effective GEO measurement tracks brand mention share, topical visibility, prompt-level visibility, domain influence and citation share, sentiment distribution, and AI traffic and conversion.

Standard web analytics tools do not capture these signals.

Similarweb’s AI Search Intelligence toolkit provides AI Brand Visibility tracking across ChatGPT, Gemini, Perplexity, and Google AI Mode, with campaign-level configuration by topic, region, and brand.
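The Share of Model definition above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular tool computes the metric: the brand names and sample responses are hypothetical, and a brand counts as "included" on a simple case-insensitive substring match, which a production system would likely replace with proper entity matching.

```python
from collections import Counter


def share_of_model(responses, brands):
    """Return {brand: fraction of responses that mention the brand}."""
    if not responses:
        return {brand: 0.0 for brand in brands}
    counts = Counter()
    for text in responses:
        lowered = text.lower()
        for brand in brands:
            # Count each response at most once per brand (naive substring match).
            if brand.lower() in lowered:
                counts[brand] += 1
    return {brand: counts[brand] / len(responses) for brand in brands}


# Hypothetical AI answers collected for a defined query set.
sample_responses = [
    "For email marketing, Acme Mail and Brevity are popular choices.",
    "Many teams start with Brevity because of its free tier.",
    "Acme Mail is often recommended for small businesses.",
    "Consider your list size before choosing a provider.",
]

som = share_of_model(sample_responses, ["Acme Mail", "Brevity", "Northwind"])
for brand, share in som.items():
    print(f"{brand}: {share:.0%}")
```

Running the same function over your own brand and your top competitors on an identical query set gives the relative view that makes the number actionable.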

Does GEO replace SEO?

No. GEO does not replace SEO, for a straightforward reason: the largest AI-powered search engines (Google AI Overviews, Google AI Mode, Bing AI, Copilot) draw from their respective search indexes. A page that does not rank in Google cannot be cited in Google AI Mode. Traditional SEO rankings remain the entry ticket to AI citation on the platforms with the largest user bases. GEO is additive: it extends your visibility into the conversational AI platforms (ChatGPT, Perplexity, Claude) where users go directly, without starting in a search engine.

How do I get my brand cited by ChatGPT and Perplexity?

To increase citation frequency in ChatGPT and Perplexity, focus on: (1) confirming that your site is not blocking GPTBot or PerplexityBot in robots.txt, (2) structuring content with BLUF openers so each section is independently extractable, (3) adding statistics with primary source citations, (4) covering the full fan-out query space for your topic rather than a single keyword, and (5) building brand presence on the third-party platforms these AI engines cite.

Perplexity is especially sensitive to content freshness, so maintaining updated publication and revision dates on cornerstone content is a practical lever.
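Step (1) above can be checked with Python's standard-library robots.txt parser. The sketch below parses a hypothetical robots.txt string and reports which AI crawlers it blocks for a given URL. The sample rules and the example.com URL are assumptions for illustration; against a live site you would load the real file instead (for example with the parser's set_url and read methods).

```python
from urllib.robotparser import RobotFileParser

# Crawler user agents named in step (1).
AI_CRAWLERS = ["GPTBot", "PerplexityBot"]


def blocked_crawlers(robots_txt, url, agents=AI_CRAWLERS):
    """Return the user agents that robots_txt disallows from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

blocked = blocked_crawlers(sample_robots, "https://example.com/guide")
print(blocked)
```

An empty list means neither crawler is blocked for that URL. Any name that appears in the list is worth reviewing before investing in the other four steps.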

How long does it take to see results from GEO?

Initial traction is possible within 4-8 weeks for well-optimized content on an authoritative domain. Building consistent, stable AI visibility across platforms typically takes 3-6 months of sustained effort. Unlike SEO, where rankings can persist for years, AI citation patterns are highly volatile, so a 30-day measurement cadence is the minimum reliable baseline for tracking progress.

Will GEO reduce my website traffic?

It can, and that is partly the point. AI engines are designed to resolve queries without a click, which means a brand appearing in AI answers may drive fewer direct visits than a top organic ranking would. The trade-off is influence at an earlier, higher-intent stage of the purchase journey. Similarweb data shows AI-referred traffic converts at 7% versus 5% from Google referrals, so the sessions that do arrive are more valuable.
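The trade-off can be put in rough numbers. The sketch below uses the 7% and 5% conversion rates quoted above to estimate how many AI-referred sessions would yield the same number of conversions as a given volume of Google referrals. It assumes, purely for illustration, that both rates hold uniformly and that a conversion is worth the same from either channel.

```python
def break_even_ratio(ai_rate, search_rate):
    """Fraction of search sessions that AI referrals must supply
    to produce the same number of conversions."""
    return search_rate / ai_rate


ratio = break_even_ratio(ai_rate=0.07, search_rate=0.05)
print(f"AI sessions needed per Google session: {ratio:.1%}")
```

Under these assumptions, AI referrals match the conversions from Google referrals at about 71% of the session volume, so a moderate drop in visits does not necessarily mean a drop in outcomes.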

Is GEO the same as LLMO, AIO, or GSO?

Yes, they all describe the same discipline. Large Language Model Optimization (LLMO), AI Optimization (AIO), Generative Search Optimization (GSO), and AI Search Optimization are all industry terms for optimizing content for inclusion in AI-generated answers. GEO is the most widely used term, credited to the 2024 Princeton research paper that formalized the practice. The terminology varies by vendor and region, but the underlying mechanics are identical.
